Courtney Shealy: [00:00:00] Appreciate you all joining. Hopefully you’ve been getting a lot out of the sessions preceding this one. There are some others that are gonna go into even greater detail to some of the things I’m gonna talk about today in terms of helping you address the white elephant in the room when it comes to conversational data.

For those of you that have CX programs that may not have ventured into that, and those of you that have already included conversational data in your storyline, maybe today is gonna be the day where you can start to get more out of it as we talk through how to start doing that. I can also point you to some other sessions, later today and tomorrow, that I think will give you some best practices for working with conversational data, but then also AI.

We’ve invested a lot in overlaying AI into our story, making sure that you all get the most out of your data through Medallia. So part of what we’re gonna talk about today is connecting you to that, but then also brainstorming with all of you.

This is a workshop. We do wanna [00:01:00] get your feedback. We wanna hear from you about your own challenges, and maybe others have something to share. So this is part me talking through and trying to connect you to things, part trying to get everybody to collaborate and talk through a few things. Yep. You’re all friends.

You’re all in the same industry. No big deal. This is easy. Just ask the questions: this is what’s being asked of me. So today I’m hoping to engage everyone from that perspective, but then also take some of the things from main stage this morning, where you saw some of the new features and functionality being applied to Medallia, and connect you to them so that there’s a little less fear of using them and you can start to adopt, trust, et cetera.

I’ll kick off just by saying that CX isn’t missing data, right? It’s missing the conversation. Many of you have already built programs that have invested in a traditional storyline of tracking loyalty and seeking feedback when you want it. It’s either super open-ended and you have no [00:02:00] idea what’s going to be in there, or it’s so tailored and structured that you miss a lot of the real conversation that someone needs to have. What’s changed, when you start thinking about the data, is the speed, the agility, and the expectations of consumers and how they’re giving you the feedback.

So the form factor, broadening your aperture of what you’re listening to, is super, super critical. So we’re here to overlay some of our new tech onto conversations, to try to help open that door and open your mind to that, but then also ensure that we are meeting the mark on some of your own questions. My name is Courtney Shealy. I run all of global pre-sales, and in my pre-sales function we’re organizing ourselves this year to not only be solution engineers, where we’re gonna be in the trenches thinking through a day in the life of how you might [00:03:00] use Medallia to your benefit, how you might connect Medallia to partners like Ada, from the session I had earlier, and how you might connect Medallia to other actioning systems of record to make sure that you’re getting the return that you need.

That’s number one. Number two, it’s also thinking through: are we innovating with you? That is super important and exciting to me, that my team shows up to say not just this is what’s best practice, but this is what’s best practice right now.

And we’re gonna continue to innovate to chase the outcomes and the challenges that you have. So it shouldn’t just be lead me, it’s tell me what your next question is, and I’m gonna make sure we design for the next three or four questions post, right? So we wanna make sure this is purposeful. We wanna make sure that we are looking at how fast things are changing so that we apply the technology in the right way.

Technology is just technology, but we need to make sure that those that consume [00:04:00] it, those that use it, and the next steps from it are the right ones. So my first question to you all, as you start thinking about the existing programs you’re either running, supporting, or a part of: what questions are you being asked most frequently?

Audience Participant 1: The questions I get asked often are where we failed, where we did not deliver the service that we were expected to deliver, and at what moment exactly we failed, because the survey doesn’t give us that.

Courtney Shealy: Correct. In my session earlier, I was talking through that a former customer of mine had said, if you have to call me, I’ve already failed you.

So the survey you gave me after my purchase, or whatever, wasn’t the moment that you failed me. You failed me once I got the sofa home and there was a problem with the sofa and the delivery. It was in that moment of the journey that the failure happened. And so I agree: a lot of times we’re asking for feedback, which is still important. We still want the feedback.

At the end of this session, there’s gonna be a QR code where I want your feedback to say, [00:05:00] I would’ve preferred to see this. I need somebody to walk me through X, Y, Z, right? So not knowing where the failure happens is because you’re relying on a single signal to answer the story for you, and the reality is you need to have multiple signals coming in that at least give you the footprint of the entire journey.

So that’s point one of thinking that through. But that’s a common question, to say: number one, where is failure happening? What’s the frequency of failure? And is that failure happening to a specific product or subset of customers, right? That failure may not be the same for everyone.

And then, to your question about how do I increase feedback response rates, it could be what’s the form factor and when am I asking them the question? Because I am one of those people that’s not gonna answer a survey in the immediacy of the transaction. It’s gonna be later. Give me time to sit, let me think about it.

So sometimes it’s the timing of when you ask. But then if you’re online, [00:06:00] right, in a digital channel, and you see someone struggling, you wanna catch them right then and there because they’re not able to execute, or you wanna get feedback right away. So for these strategies, you have to think of your own little mind map of decision trees, so you make sure you have the bases covered for collecting feedback, and then you’re also including other signals, because the aggregate of that is going to give you a better connected experience with the customer.

What’s changing the most in CX right now is that the questions are coming faster. They are moving faster than the actual programs themselves. And so one of the things that we wanna start to solution with you all on in these programs, and in the way you’re using Medallia, is to start thinking about how do I adapt my program faster?

What do I need to include and how do you partner with me to help execute on that? You heard in multiple sessions that there’s the technology, there’s the people, but ultimately everything starts with the outcome that matters, right? Knowing that you’re [00:07:00] very clear on that and partnering, whether it’s with us or with a services partner that we work with that’s implementing Medallia for you, right?

Making sure that you paint that target bright, loud, and wide to say these outcomes are what matter to us right now, quarter to quarter, month to month, however that is. The more focused and the more connected you are to the outcome goal you have, the better design you get, and the better optionality in the decision tree that you build into your program.

Because you can always trace back to this falls in this bucket. I’m trying to grow revenue. If I’m trying to grow revenue, I need to watch my shopping experience. I need to watch how my agents encourage or upsell on a phone call. I need to think through my transactional pieces in my chat component.

I need to make sure that there aren’t any blockers there, that I’m not creating frustration. Where am I preventing that outcome from happening? So these are the things to think through: if the questions are coming faster, [00:08:00] and there’s data coming in from anywhere and everywhere, how am I gonna manage looking through all of that quickly, and what’s blocking me from doing what I need to do?

We wanna make sure that your data is valuable no matter how much data you have. Number one is the variability of when that data comes in. For a lot of healthcare companies, here in the US in particular, that onboarding or January to March timeframe is when they get probably 90% of their phone calls for the year, because your plan just changed.

I got all the new rules that I gotta follow, et cetera, and I’m making sure that I follow my insurance plan. So knowing that I can operationalize that data is super, super important. There are two other sessions that are gonna go into detail a little bit later about that. Lance Stalls and Shelby Patro and a few others are gonna be conducting them, where it’s: how do you make the most out of those conversations?

It’s called contact center data: high volume, high value. But sometimes it’s daunting, [00:09:00] right? It’s a little scary because it is so high volume. What’s really the truth? What questions should I ask of it? Because my role in the organization might be product focused. My role might be service oriented.

Mine might be digital. So part of what deploying Medallia means today is taking in these signals, but then also organizing yourselves to answer those questions and make sure your frontline leaders get those answers tailored to them specifically. So that means deploying text analytics properly.

That means deploying and overlaying AI to answer questions quickly. So you’re gonna see some examples of that today, and why you shouldn’t be as scared of it as some may think. The key is to get consistency in what you’re looking at. Another quick question: how do you feel your role specifically is changing because of AI?

Audience Participant 2: It’s definitely a good thing. It’s just that we need to know more about how to optimize [00:10:00] AI in our functions.

Courtney Shealy: Okay. That’s a great one: how to optimize. Okay. Does somebody else have another comment?

Audience Participant 3: Sorry. I was just gonna say, the executives now assume that this technology is just so intuitive and easy to use that they expect us, as of yesterday, to have the answers already in the reports that they’re looking for.

And not only that, but to be able to then analyze that and drive some type of result or change with that automatically. But we’re still at this infancy stage where we’re trying to understand, like you just said right now, is it valid? Is the prompt that I gave it actually looking for what I’m looking for?

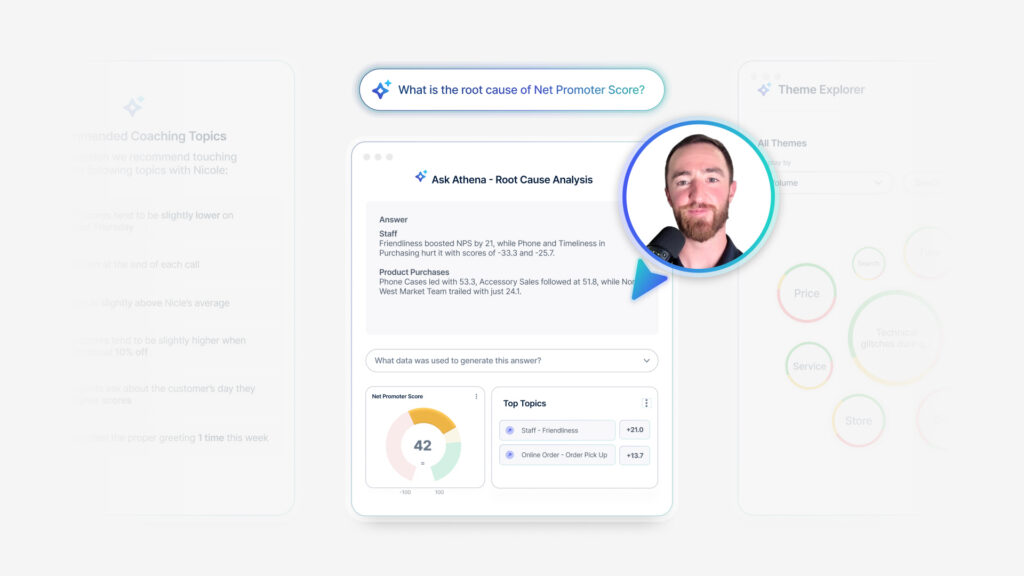

Courtney Shealy: Excellent point. That’s actually one of my favorite examples, the prompt, because I did an internal exercise for us where I wanted to understand where we are providing value to our customers most often across industries. ’Cause oftentimes, if I’m talking to a large big box retailer, for example, they wanna know what

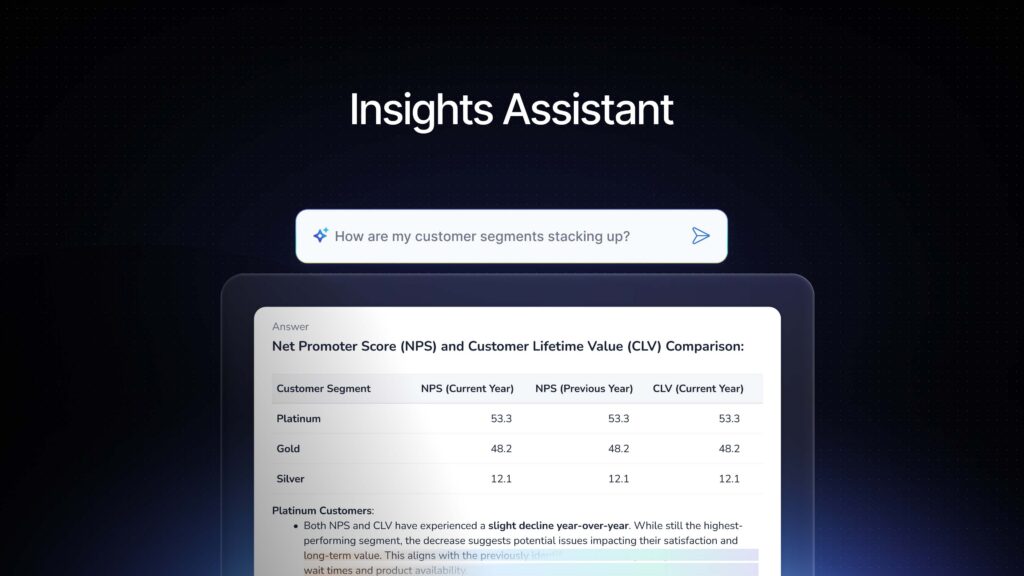

Financial institutions are doing differently. ’Cause just ’cause you’re in different [00:11:00] verticals doesn’t mean you don’t have the same challenges and same questions. And in one of the examples I gave, I loaded in all this data and I started prompting and asking questions of our own internal AI, to say, what themes do I see?

Where are people winning and where are customers most excited? What have they tried? What have they experimented with? Because I want to arm my team with that so I can share it with you all. And so my prompts delivered the story for me. I then shared it with Sid. Are we shocked that Sid probably had very different questions than Courtney, even though I’ve worked for the man for 15 years.

I will say that he inspires me every day in terms of how fast he thinks ahead of where this needs to go. And I will say he starts looking at it from a completely different perspective because of the conversations he has with certain C-Suite people. And so I think that is one of the things the prompts can steer you in many different directions.

It didn’t mean my data was wrong. [00:12:00] I asked it a very specific question. He asked it a different question, and he was gonna do something very different with it. So when you start thinking about, do you want people to be able to look at the data in a free-spirited way? Yes, that’s probably true in some cases.

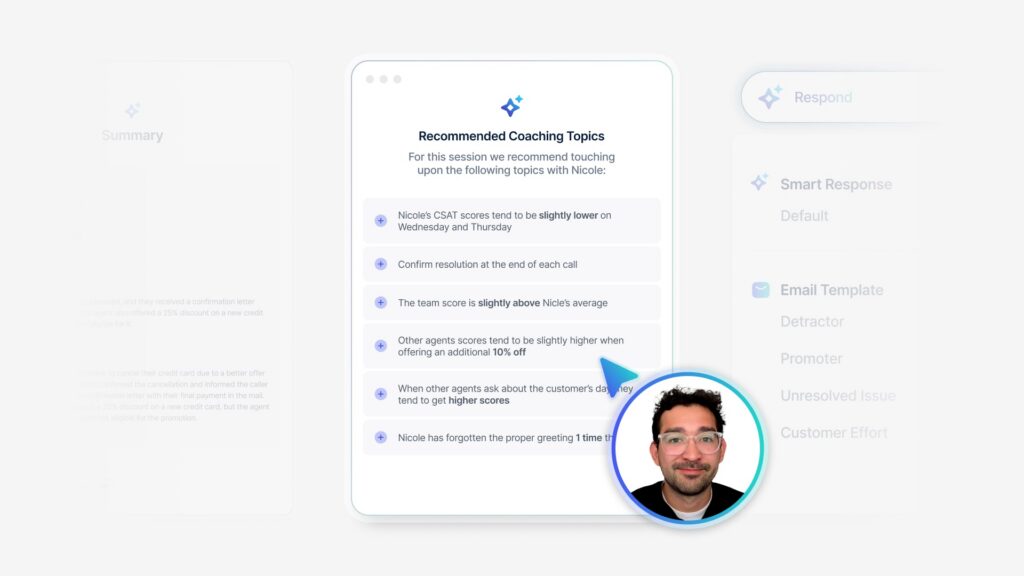

But in other cases, you wanna keep them focused, eye on the prize, on what is their job, what can they influence, what can they change? So I want the prompts, and this is why the Frontline Ready AI for Medallia has me excited: I know that the prompts are curated to deliver consistency in the answer.

It’s defensible. It’s backed by the data that’s there. No one can game it and say, all right, just gimme 10% of that so that it looks better for me. No, the data’s the data. It’s there. And so you just start to garner this trust of understanding what I’m seeing so that I can then make a defensible act, make the next change that I need to make.

And if I ever had to be challenged on it: I don’t think that’s the right approach, Courtney. I don’t think it’s the right story. I don’t think it’s the right [00:13:00] thing to do. I can always go back to my main dashboard where I asked that prompt, and I can have all the data right there to say, this is what our customers have said up to this point.

As an example, as much as I’ve been in technology, it’s rare that I’ve ever been an early adopter. I always let my husband go first. It’s, let me know how the upgrade went before I upgrade my phone. ’Cause I don’t wanna be off on a business trip and all of a sudden the latest iOS is filled with bugs, or whatever it was, right?

So I always let him go first. I’m like, I want the new phone to get the kinks out before I get it. I’m one of those people who’s willing to wait, because I’m more pragmatic. The older I get, though, now with this new AI stuff, I’ve gotten very curious, very fast, and very creative, much to the chagrin of some of my brand people here. But it’s still been fun to think about how do I materialize our value and how do I share that and show it?

So when we start thinking about putting AI in the proper context, here’s where I wanna break it down for you a little bit, and I’m gonna give Joanna Mo, [00:14:00] who is one of our VPs, a product manager, props for giving me this idea. She said something to me which then allowed me to spiral and really think about how I might talk through this.

What’s actually changed about AI? ’Cause it’s been out there, right? I’ve been in text analytics for 16-plus years now, and it started out as machine learning. And machine learning enabled NLP, natural language processing, to start to break down your unstructured data. Then NLP became NLU, natural language understanding, right? So we start to say, now apply emotions, and tag not just sentiment, not just a sentiment score, but think about key emotions. Let me find all the frustrated, confused customers, et cetera.

Then we moved to natural language generation, which is AI essentially saying, I’m going to take cues from what you asked me, look at your data, and I’m gonna fill in the blanks. I’m gonna illustrate how this works. And that’s how natural language generation has become the AI that we’re all used to seeing today.

It’s all the rage because they’ve been playing with it and playing with us in our everyday lives, and [00:15:00] we didn’t even realize it in a lot of cases. Take Amazon. This sweater may not be your fashion choice, not saying it would be, but I’m gonna give you an example. Everybody’s shopped on Amazon, right? So this is Amazon Prime.

You see the product in the middle there, but on the left side, right, you start to see Rufus. This is their AI agent. And by agent, I mean it’s a blend of AI and machine learning and a little old-school thing. Those of you who have ever done Mad Libs, right: gimme your noun, verb, adjective, and I’m gonna fill in the blank.

I’m gonna curate a sentence and apply things based on what I see. So you look at it, you think it’s AI. They call it AI, but it’s a little bit of a blend. Medallia has been doing the same thing: a little bit of a blend of machine learning, a little bit of picking up on key topics that we’ve tagged, that we’ve enriched, organizing it for meaning, and then ta-da.

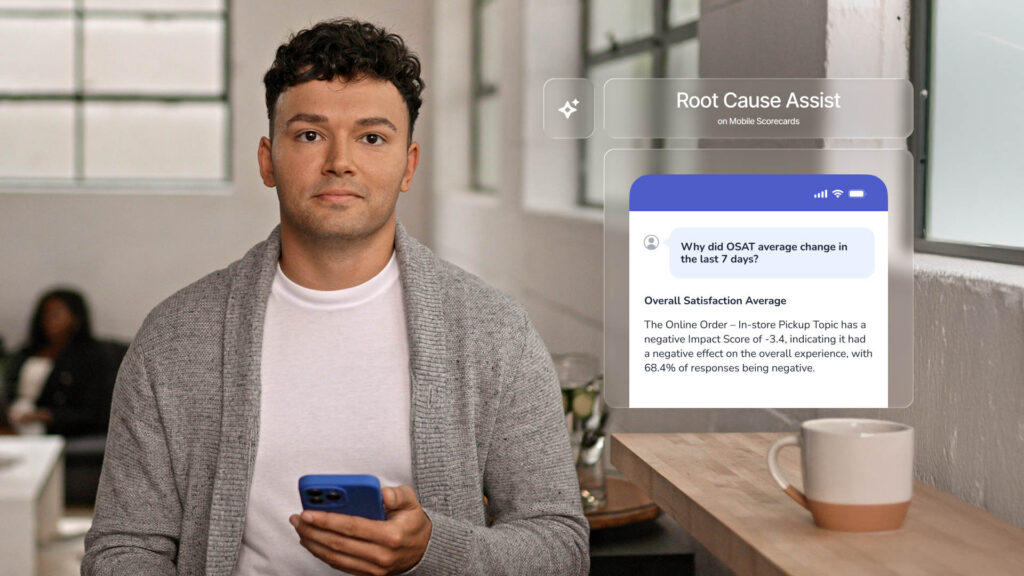

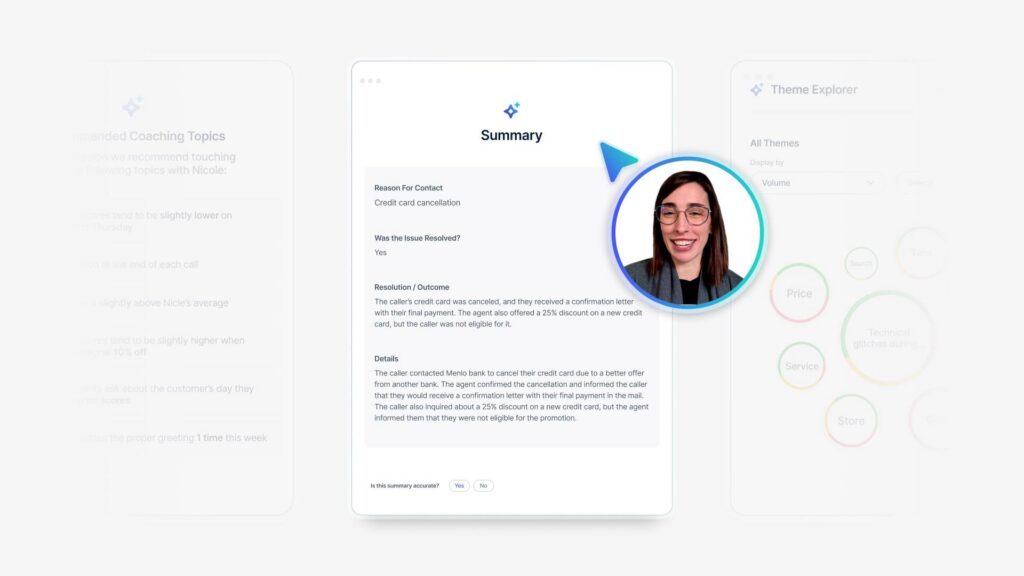

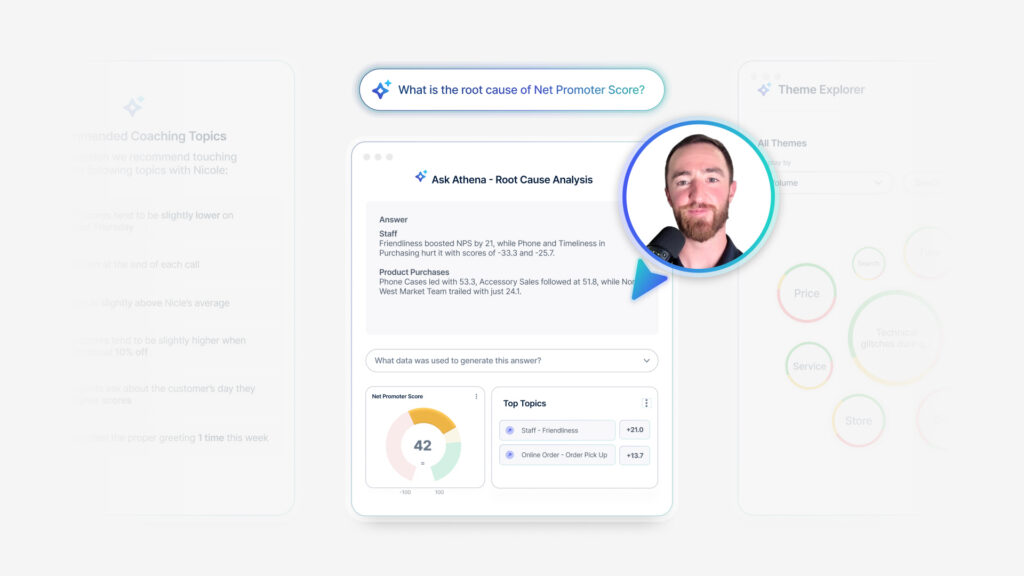

Now we have topic summaries. We have the ability to [00:16:00] summarize a call. We have the ability to do root cause assist, where I’m saying, based on this data, tell me the who, what, when, where, and why of that data point going up or down. So on the left, the general sentiment there is a summary of customer reviews.

You can still go look and read the reviews, but remember when you had to go look at them and what’d you do? You first went to the one stars, didn’t you? I do. I wanna see what people complained about first. See? Yep. You shopped the way I do. I was like, hey, lemme find the negative. Is it itchy? If I see one itchy, I’m out.

I’m not gonna do it. I’m not gonna buy it. Even if it has a thousand reviews, I just need the one to tell me it’s itchy and I won’t even entertain it. So the general sentiment is there, but then look at the tags down here with the little check marks: the quality, the fit, the color, with the green check mark, right?

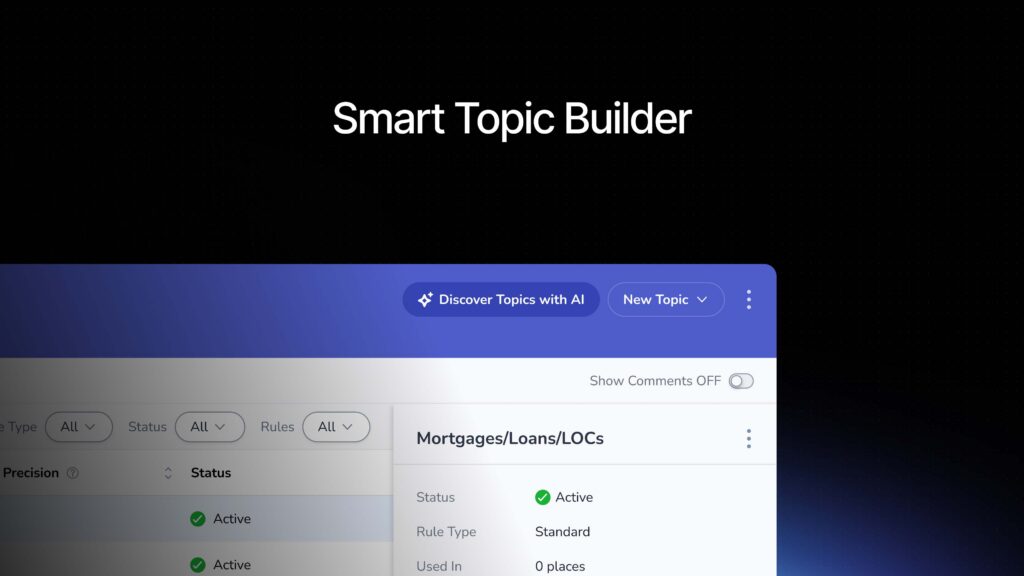

Versus the dashed line. The green check mark is sentiment: it means it’s a positive comment, right? So this is the enriched tagging that they’re doing. [00:17:00] So with our topic tagging, we’re organizing for purpose. This is saying, give me feedback on the quality of the actual garment itself. Whereas if I really wanted this to be about style or cost or price or something of that nature, I might have different tags that matter, and I wanna bring those up.

So that’s why different topic sets matter to the questions you wanna ask. It matters to training the AI to get you the information you want, right? These are very easy things, and it actually kinda shortens your approach to building your programs. And then at the bottom, there are the FAQs, right? So then it’s giving you the prescripted prompts, right?

Here are some things I can help you with. Does it shrink? What it’s doing is looking at all of those customer reviews and looking at common questions it sees, and when a question is seen frequently enough, it gets put here. This is an everyday interaction with AI and machine learning that is, oh, this is familiar.
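The frequency idea described here can be sketched in a few lines of Python. This is only an illustration of counting how often a question recurs and surfacing the most common ones; it is not how Amazon or Medallia actually implement it. The function names `normalize` and `top_questions` and the sample questions are hypothetical.

```python
import re
from collections import Counter


def normalize(question: str) -> str:
    """Lowercase and strip punctuation so near-identical questions group together."""
    return re.sub(r"[^a-z0-9 ]", "", question.lower()).strip()


def top_questions(questions: list[str], limit: int = 3) -> list[tuple[str, int]]:
    """Return the most frequently asked questions with their counts."""
    counts = Counter(normalize(q) for q in questions if q.strip())
    return counts.most_common(limit)


# Hypothetical questions pulled from customer reviews (illustrative only).
sample_questions = [
    "Does it shrink?",
    "does it shrink",
    "Is it itchy?",
    "Does it shrink?!",
    "Is it true to size?",
    "Is it itchy?",
]

print(top_questions(sample_questions, limit=2))
# → [('does it shrink', 3), ('is it itchy', 2)]
```

A real system would group questions by meaning rather than by exact wording, but the principle is the same: the questions seen most often are the ones promoted to the suggested prompts.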

I get it. It’s understandable. [00:18:00] So think about now applying that to your own data. So basically the signal from the outside world is that this should be the expectation. Amazon’s telling us this, right? Summarization becomes the interface, ’cause that’s what I’m engaging with. I’m not engaging with a ton of charts and so on all the time anymore.

I’m starting to evolve. I want the charts to help me illustrate and tell a story when I’m standing up here, because people can see, and I’m one of those people that needs to see the big picture. But the summary behind it and the meaning behind it is what I want: the quick elevator pitch.

I can remember working with some more junior people on my team at one point and oh, I found all these great insights from my customer. It’s amazing. Let me show you the dashboard I put together. And they come into my office and I was like, okay. And they open up their laptop and then I shut their laptop.

I was like, tell me about the data. If it was that exciting, you should already know. You shouldn’t have to click through. I see someone in the back laughing, ’cause I probably did this to you. But [00:19:00] this is what summarization gives you, the sound bites that you’re looking for, so that you can talk to your own data, you can engage with your data, and ultimately, if someone challenges you, you have the deep insights behind it.

The summarization now empowers you to speak faster and understand faster. Then, if you want to prompt and engage with it to say, okay, is that still true for my highest-tiered customers? Is that finding true for my early adopters? The answer might not be the same. It’s this evolution of saying your CX programs are changing quickly, and the variability of the data coming in is happening so fast.

You have to be able to ask questions very quickly of that data and then trust what you’re getting back, and that’s part of what we’re trying to overlay in our approach to AI. Calls, chats, transcripts, right? Seek and you shall find. There is so much data sitting in your conversations, whether that is an agentic agent that you’ve deployed, or an actual chat, a [00:20:00] live session with a live agent.

If it’s a bot, they drive so much opportunity, and it’s in the moment. It can be real time. It’s not a laggard to an experience. A lot of times it’s in the moment of the experience. And a lot of the disconnect that we see with the large customer base here at Medallia is that they’re analyzing part of their CX program over here, and then they’re analyzing part of their CX program over there, and that creates this context gap in between.

So who’s right? Is it the people in the contact center? Is it the people running the CX program? Is it the digital team? And the reality is everybody has some sense of truth. But our recommendation, where you start to double down on ROI and creating better experiences, is to bring that together.

You shouldn’t be kept from data that matters to you in your program. I used to say, I wanna be able to reach my arm into the contact center and ask for that data. [00:21:00] Do what I want with it. Ask the questions of that data that matter to me without disrupting your day-to-day. I don’t wanna disrupt the day-to-day contact center team.

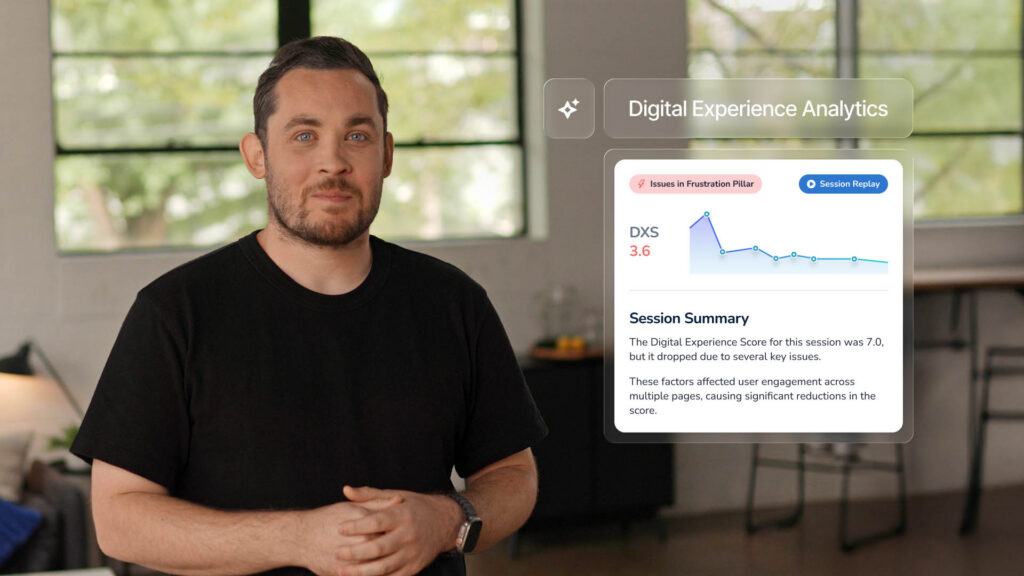

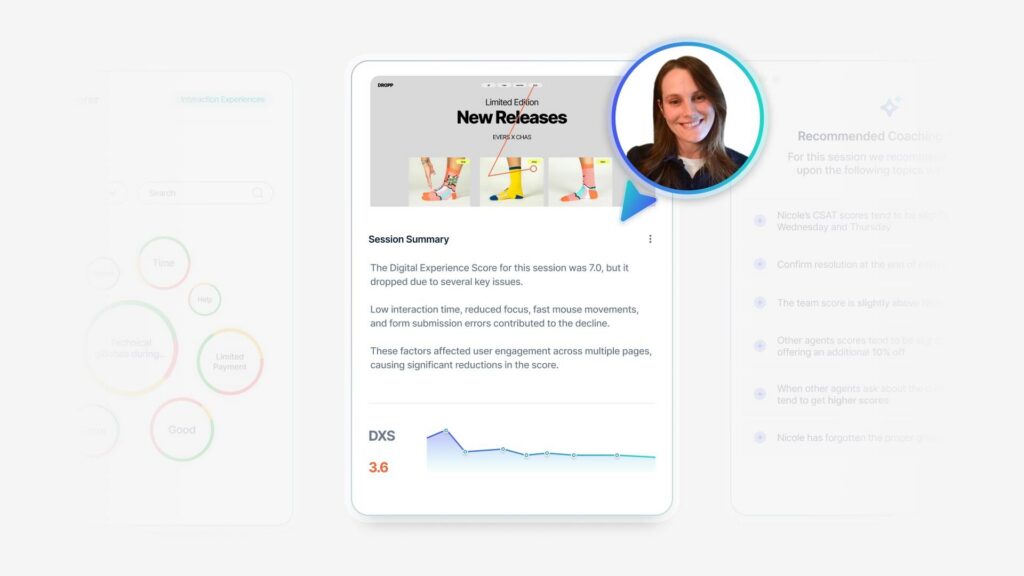

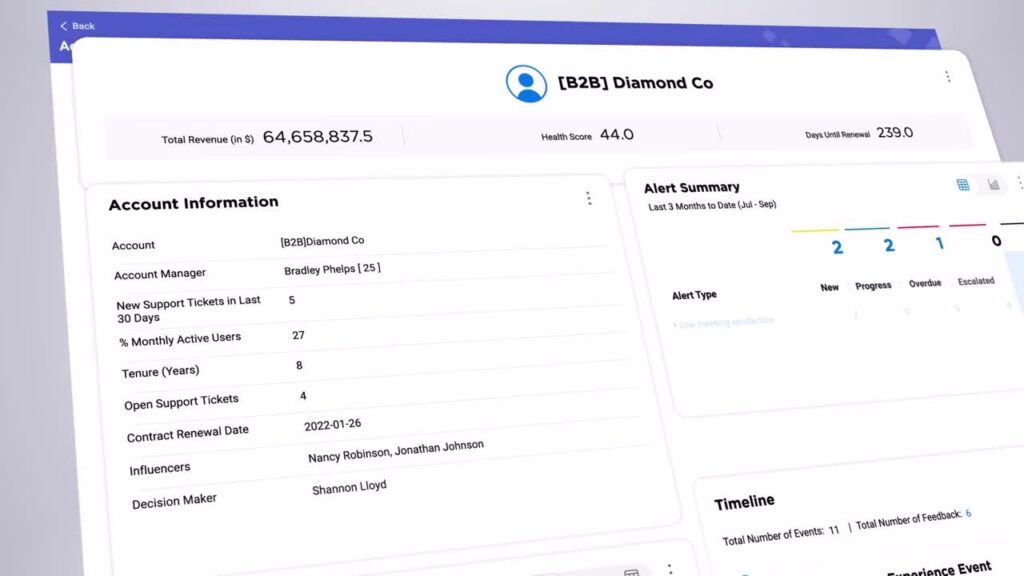

I wanna make sure they can execute. But what I wanna be able to do is make sure that for everything that resulted in that call, all of those people get the information that they need. How are we at Medallia overlaying this? What you’re seeing right here is an example of a phone call. Okay? At the top, you’re starting to see when people are speaking.

It’s a little bit about the talk track. You could play the call if you needed to, but on the left are topics and sentiments. In the middle is the conversation, the back and forth, with some of the enrichments, right? Where we started out in this industry, when we started looking at conversations, was: I need to understand what people are saying.

I need to create the same tags I would for my survey program to make sure I can group that data together to get a single source of truth. This is where the true voice of the customer is, right? They’re actually talking to you, [00:22:00] they’re engaging with you. So why shouldn’t I listen to it with the same efficacy and the same passion I had with my surveys?

So when you start thinking about, okay, this ends up being a lot of data. You see a lot of topics there at the bottom. So we’re doing the same thing that you just saw on Amazon, in terms of that being the expectation for creating that storyline. And then you see, okay, here’s the call recording that comes from the CCaaS.

CCaaS platforms were built for a very specific purpose: answer phones, execute the call, typically as fast as possible. Not always the case. There are some people that like to chat, but time is money. It’s a phrase for a reason. So they wanna make sure, if you are calling, that the call is handled, but they typically have to handle more than one interaction at a time. It’s a tough job to answer the phone and not know if they’re gonna get yelled at, et cetera.

So a CCaaS team is focused on making [00:23:00] sure that an agent has the tools to answer the call, route it for workflow, and hang up the call. So they’ve spent their time ensuring that part of the equation works, which is great, but it’s not built with CX in mind. It is not going to allow you the flexibility and the accessibility to the data.

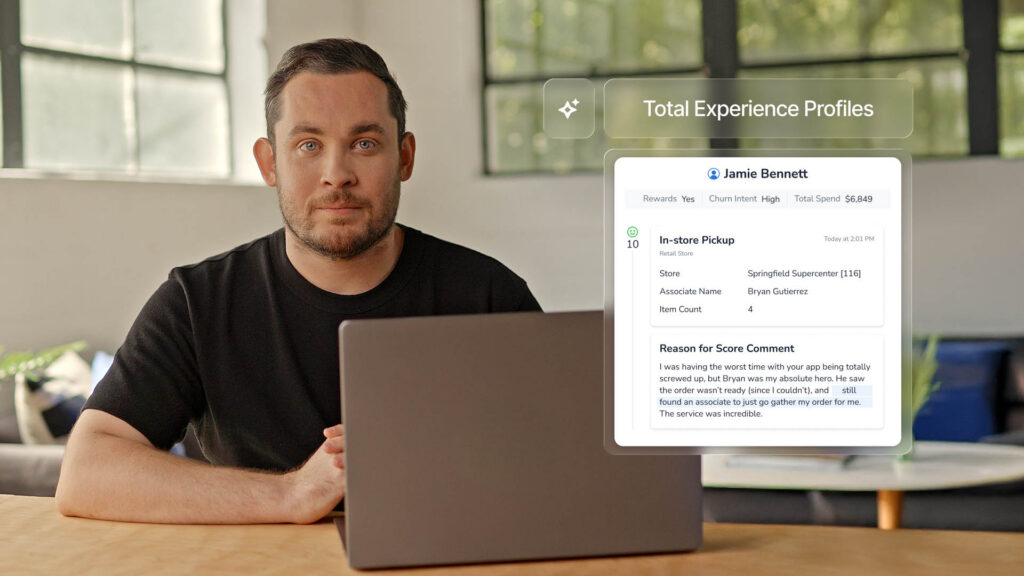

Taking that same call into Medallia would allow that for the rest of the business, and I’m in the business of making sure the entire enterprise can get access to that information without disrupting the rest of the story of what’s needed. For the rest of this call, we start looking at, here’s all the metadata from it, so we can still have that metadata.

Why does metadata matter? Metadata helps us create segmentation. Metadata helps us understand where that call came from. Maybe start to build a profile around Courtney every time she calls and nags me, et cetera, right? So these are the types of things: who was the agent that supported me? Was there anything agentic that led to the live agent first? [00:24:00]

What should I learn there? Start thinking through that. What this helps me do is start to build a profile of my end customer so that they know who I am, right? So every interaction gets collected. I start to think about all of my customers as a whole, but I still have a one-to-one map to working with Courtney that starts to get developed.

With this metadata, here was the call again. We highlighted this a little bit as I started going through. I’m looking for sentiment. I am redacting things that my CX team doesn’t need to see. They don’t need to see the credit card numbers, the phone numbers, the account numbers. What matters to the CX team, the product team, the marketing team, et cetera, is what was mentioned during that call, so I can make it a better digital experience.
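As a rough illustration of the redaction step described above, here is a minimal Python sketch that masks card, phone, and account numbers in a transcript before it is shared with other teams. The regular expressions, the placeholder labels, and the `redact` function are assumptions made for this example; they are not Medallia’s implementation, and a production redactor would need far broader pattern coverage.

```python
import re

# Hypothetical patterns for illustration; real redaction needs more robust rules.
REDACTION_RULES = [
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    (re.compile(r"\b\d(?:[ -]?\d){12,15}\b"), "[CARD]"),
    # US-style phone numbers such as 555-867-5309.
    (re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b"), "[PHONE]"),
    # 6 to 12 digits following the words "account" or "account number".
    (re.compile(r"(account(?: number)?(?: is)?\s+)\d{6,12}\b", re.IGNORECASE),
     r"\1[ACCOUNT]"),
]


def redact(text: str) -> str:
    """Replace sensitive numbers with placeholders, leaving the rest of the text intact."""
    for pattern, replacement in REDACTION_RULES:
        text = pattern.sub(replacement, text)
    return text


sample_turn = (
    "My card is 4111 1111 1111 1111 and my phone is 555-867-5309. "
    "Account number 00123456 was charged twice."
)
print(redact(sample_turn))
# → My card is [CARD] and my phone is [PHONE]. Account number [ACCOUNT] was charged twice.
```

The point of the sketch is that the complaint itself ("was charged twice") survives redaction, which is the part the CX, product, and marketing teams actually need.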

Next time, I can use that soundbite for a product enhancement or even a promotional element for the product, right? So again, I said this earlier, it’s not always about looking for the negative. What about looking for the positive? [00:25:00] Years ago when I started looking at call data for the very first time, it was, where are we failing?

Are we failing? You just brought that up. Where am I failing the customer in the journey? But where am I succeeding? Why don’t I amplify that? If you mentioned something in the beginning of the call versus waiting to the end, I had a 35% return on the adoption of that ask. So when you start looking back at what I’m doing with the phone call, sometimes it’s about making it a better CX journey.

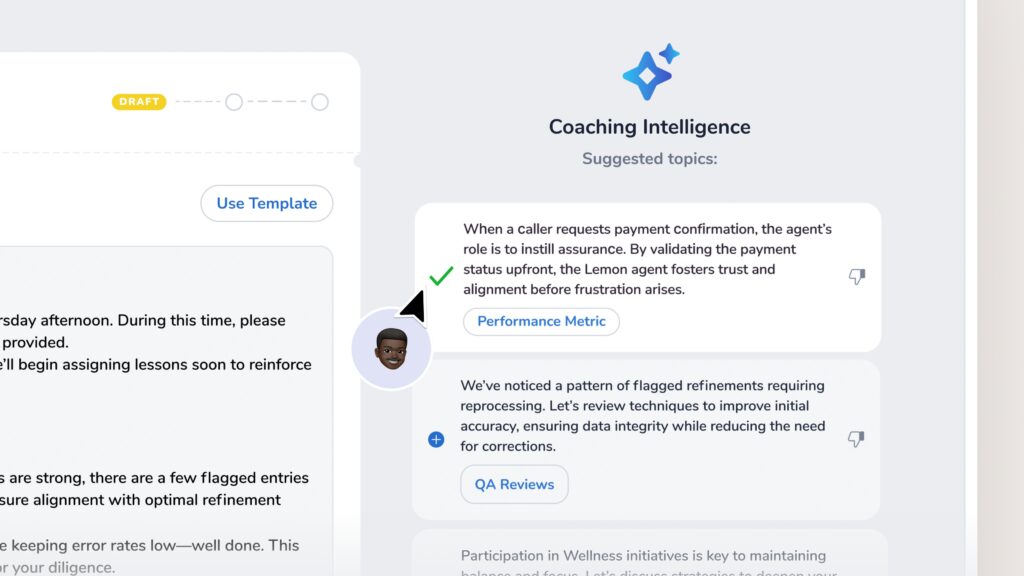

Sometimes, why can’t the business outcome be, how do I grow revenue? How do I train my people, my agents, whomever, to get more out of that moment? If you are spending one-on-one time with me, let me get the most outta that moment that I can. So we want to retrain how we ask questions of the data, which means let me make sure I tag the data for the purpose that matters to me, which means making sure we apply our topic sets to get the right information and to keep evolving, layering in AI to let me engage with it over time.

That’s why the investment [00:26:00] you saw on stage today was: can I build topic sets faster? Can AI help encourage me? ’Cause this is what I see as a winning formula. Can I engage with it in a conversational way?

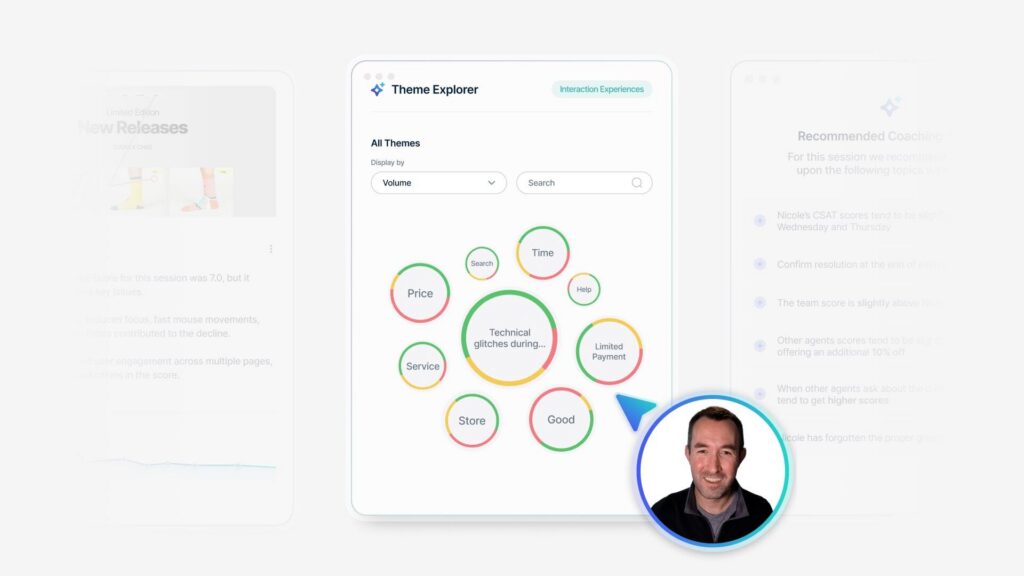

So they don’t have to think about charts and diagrams right now. I’m just trying to find out. When you start thinking about how we start to organize data, we always start day one to say, okay, what are the top call reasons? What are the top chat reasons, right? What we’re trying to do is

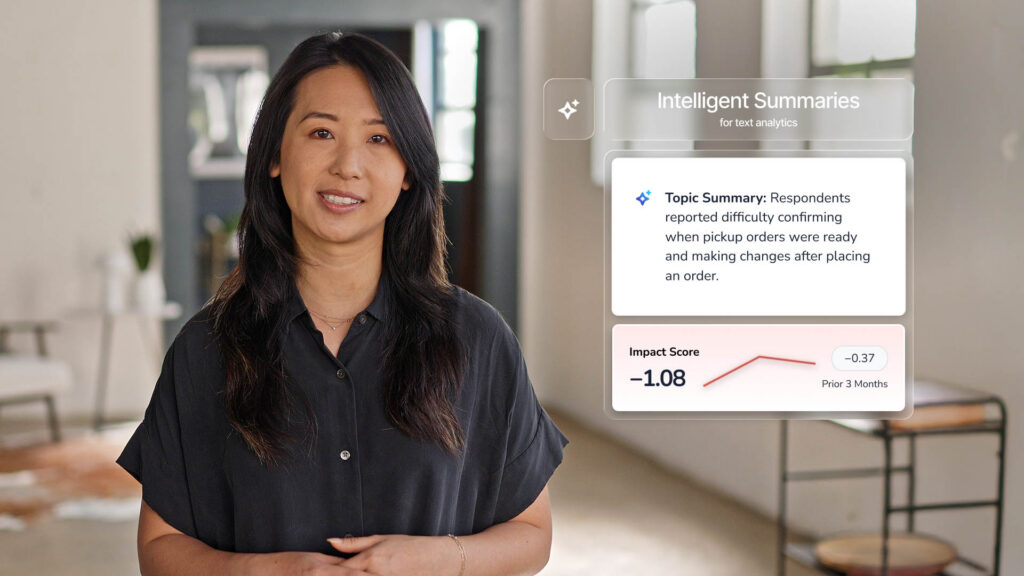

Pull this stuff together, and at a lot of our booths, right, they’re gonna show you this live: how I can click on, you see this little summary view in that second column here, right? So across all verified payments, when people are contacting me about verifying payments, I can see the summaries. And the summaries are gonna tell me what was the feedback on that? What was the intent? Why are people even talking about verifying payment?

And then I can see, was that easy? Was that hard? Was that something that [00:27:00] broke down digitally? Couldn’t look it up online, had to call. That’s frustrating. I don’t wanna have to call. I really don’t. I’m a busy person. I’m usually in my car when I wanna find something out, so those types of things.

So this is where you start to say, we’re overlaying this to where you don’t have to dig for it anymore. But for every summary that you see here, as you start to go through it, here’s the example, right?

A, I’m gonna tell you how it was generated. B, I’m gonna give you a summary. And this summary is taking a little bit of a Mad Libs approach as well. It’s like: respondents reported this, here was the pain, here was the understanding associated with fees. Some individuals encountered issues with activating newly opened accounts.

It’s literally going through and prompting for common questions that you’re gonna ask, and then if I need to know more, I can always open that up and go into root cause assist. Now, break that down for me. That was a great summary, but let me know more. Who were those new customers? Was it customers that were within the first three months, customers that were a [00:28:00] year old, those types of things.
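The Mad Libs idea described above, where a fixed sentence is filled in from tagged data and the summary states how it was generated, can be sketched as follows. This is a toy illustration in Python under stated assumptions: the `TaggedComment` structure, the `SUMMARY_TEMPLATE` wording, the `summarize_topic` function, and the sample comments are all hypothetical and do not represent Medallia’s topic summaries.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TaggedComment:
    topic: str      # topic tag, e.g. "verifying payments"
    sentiment: str  # "positive" or "negative"
    issue: str      # short phrase describing what the customer reported


# Fixed sentence with blanks, filled in from the tagged data (the Mad Libs part).
SUMMARY_TEMPLATE = (
    "Based on {n} tagged comments about {topic}, respondents most often "
    "reported {top_issue} ({top_count} mentions). "
    "{negative_pct}% of comments were negative."
)


def summarize_topic(comments: list[TaggedComment], topic: str) -> str:
    """Fill the template for one topic, stating how many comments back the summary."""
    subset = [c for c in comments if c.topic == topic]
    if not subset:
        return f"No tagged comments about {topic}."
    top_issue, top_count = Counter(c.issue for c in subset).most_common(1)[0]
    negative = sum(1 for c in subset if c.sentiment == "negative")
    return SUMMARY_TEMPLATE.format(
        n=len(subset),
        topic=topic,
        top_issue=top_issue,
        top_count=top_count,
        negative_pct=round(100 * negative / len(subset)),
    )


# Hypothetical tagged comments (illustrative only).
sample_comments = [
    TaggedComment("verifying payments", "negative", "unexpected fees"),
    TaggedComment("verifying payments", "negative", "unexpected fees"),
    TaggedComment("verifying payments", "negative", "trouble activating a new account"),
    TaggedComment("verifying payments", "positive", "quick confirmation"),
    TaggedComment("delivery", "negative", "late arrival"),
]

print(summarize_topic(sample_comments, "verifying payments"))
# → Based on 4 tagged comments about verifying payments, respondents most often
#   reported unexpected fees (2 mentions). 75% of comments were negative.
```

Because the counts come straight from the tagged records, the same data always produces the same sentence, which is the consistency the talk is pointing at.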

Ultimately, embracing AI within Medallia is giving you the same accessibility you use in your everyday life today, shopping online. Looking for that type of information to make your own personal decisions is the same thing that we want to help you and encourage you to do in your CX programs today.

So accessibility is empowerment to action, and as we invest in getting you closer to the right action, we hope that you invest in communicating with us: here’s what I need to be empowered. I need this question answered. I need to organize my data in this way. We’re leaning into accessibility, but we also wanna provide you defensible controls, right?

So that’s why we give you those summarizations, but then they’re backed by the data in the chart that’s right there. If someone challenges that summary: how many data points gave you that summary? Do I need the [00:29:00] data points for 2000 entries, 5,000 entries for it to be meaningful? Or do I just need to know what’s happened in the last week, the last couple days?

Is this going up? Is this going down? Those are different decisions, and all of these things, depending on how you wanna set things up, become powerful for you in your programs. What have we learned? Summarization is ambient. It’s expected. It’s not something you have to run. It’s gonna be always on in the Medallia system.

Give me the shortcut. I’ve set it up. These are the common questions I know I’m asked, so I wanna be prepared and I wanna have answers, for two or three more questions at the same time. I wanna be able to look at something and answer as many questions as I possibly can, but then the system carries the burden. Users don’t have to perfect the question.

My prompt versus Abby’s prompt versus your prompt. I don’t have to worry about that because I’ve given them the data, I’ve given them the prompts to answer the common questions, and then eventually. I might empower certain people in my [00:30:00] organization as we launch. How do I engage with the data in a more freeform way and ask all these questions like what Sid and I were doing earlier with the same data set, right?

He asked questions that were pertinent to him, and the more I learn what types of questions he wants, guess what that means? The more I now know the questions that he’s gonna come to me with, so I can preempt that next time. These are the things that we want to help your programs with, right? So that it’s not so unwieldy bringing in contact center data.

It’s not so unwieldy bringing in that chat data with your survey data. If anything, I do believe that the more I understand that data, the better my ask could be when I do ask for feedback. It could be more targeted, it could be more prescriptive, it could be launched at the right time. I don’t need 50% of people to respond to me.

I just need a handful because I’m testing a hypothesis and a theory, and then I can make sure I get the right type of feedback, right? Trust beats cleverness all day, right? So I wanna be curious and creative with my ask of the data, but I [00:31:00] wanna trust when I get back. Just because I ask the question in a very kitschy way doesn’t mean I want the data to somehow be manipulated so that.

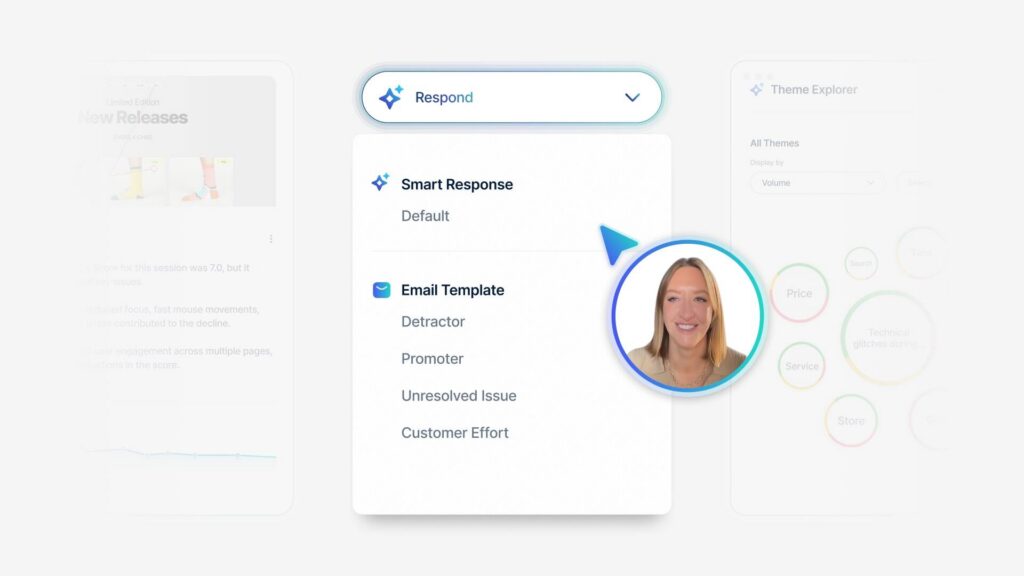

It works in my favor versus your favor. So that’s why we wanna be grounded in truth. We wanna make sure when you do see root cause assist topic summaries, when you start to get smart response we start thinking through the voice of who you are to make sure that AI is being deployed in a way that fits your organization, that doesn’t have two people in your team get different answers.

To the same question, and it’s something that you can go back to say, okay, we didn’t waste our time, and if I need to dig deeper, I can dig deeper, but at least we’re all starting from the same place, bringing that standard to cx, right? We wanna apply the same pattern to customer conversations. We wanna make sure that we are not afraid of looking at that data and it’s on its own, but in the collective, we are constantly designing how we deliver.

[00:32:00] What we deploy for each of you and the way that we help you set up your programs to keep you pushing more towards the modern edge of what you need, right? We don’t want your programs to be stagnant. We wanna lean in the new ELT that joined MEA last year. I worked with them. I joined, I worked with them for the last 15 plus years, and it’s coming in with a fresh set of eyes now with all this new technology and thinking, wait.

How do we come in and make this even bigger and better, and how do we make sure people feel comfortable with what they see? The more questions you ask us, the stronger we’re gonna get to make sure we deliver on your ask. So what on Amazon, how do you work with that? How do you see this in the same, your data at Medallia as well, where does AI help you today or does it make it harder?

Audience Participant 4: What I find sometimes is that I’m less close to the feedback than I was before, when I was the one reading through a hundred comments from our closed-loop program for the [00:33:00] previous month.

Courtney Shealy: But it’s a lot of pressure. It’s, am I missing the forest for the trees? Did I just rely on the summarization to make my decision? Or can that summarization point me in a direction to then say, okay, now I need to go answer how often?

I think of it as: what did I identify, what did I quantify, and then what’s being recommended, right? And if I apply that logic to just about anything, when you start thinking through, okay, I identified this, or here’s my stat, but what’s the frequency of that?

And then, with the frequency of that happening, was the sample size really small? Was it just a particular set? I have to ask, I have to investigate a couple of questions about that. And then the right action could be very different depending on the segment, depending on the product. If this was a brand-new product we launched, I expect a lot of issues and bugs and so on, for example.

But if it’s something that has been tenured, adopted, why am I seeing a drop-off? Is it because it’s not keeping up with expectations? Is it no longer the favorite thing? Those types of [00:34:00] things too.

Audience Participant 5: Yeah, I think the other element of that is, if I’m continuing to ask the same question over and over in Insights assistant, should that really be a report? Many of you guys have programs that are 5, 6, 7 years old. Are we watching those engagement metrics? Are they still answering the questions that are relevant today? And am I using the right tool set? Is it really time for a reporting refresh?

Audience Participant 6: Yep.

Audience Participant 5: Versus, hey, I’m gonna get a quick answer to this question I’m curious about. So making sure we’re considering that.

Audience Participant 6: From a summarization perspective, part of the challenge is the sanitization of the information. Taking her example, where she read the hundred cases: there’s gonna be sophisticated customers that actually know the error code and might mention it in 10 of those cases, right?

Audience Participant 5: And.

Audience Participant 6: That error code is potentially not gonna be embedded or up-leveled by the machine learning, ’cause it wasn’t representative enough in the data set. Correct. So that’s one challenge: bringing the overly sanitized data back to product teams, and they’re like, I can’t do anything with that.

And so it’s [00:35:00] needing to drill down into the nuances, ’cause the up-leveled data rarely will influence a product team to take something off their backlog because you brought them this high-level problem.

So that’s one of the challenges that we’re seeing with it. With AI.
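As a minimal sketch of the drill-down described above, the snippet below pulls error codes out of raw comments and counts how many comments mention each one, so a code that shows up in only a small share of cases can still be surfaced next to a summary. The `E-1042` style code format, the regular expression, and the sample comments are assumptions made up for illustration; this is not a Medallia feature or API.

```python
import re
from collections import Counter

# Hypothetical error-code format: one capital letter, a dash, 3 to 5 digits.
ERROR_CODE = re.compile(r"\b[A-Z]-\d{3,5}\b")

def count_error_codes(comments):
    """Count how many comments mention each error code (once per comment)."""
    counts = Counter()
    for text in comments:
        # A set keeps one comment from counting the same code twice.
        counts.update(set(ERROR_CODE.findall(text)))
    return counts

# Made-up sample comments, not real customer data.
comments = [
    "App froze with E-1042 during checkout",
    "Love the new design",
    "E-1042 again, and then E-207 after restart",
    "Support was slow to respond",
]

for code, n in count_error_codes(comments).most_common():
    print(f"{code}: mentioned in {n} of {len(comments)} comments")
```

A count like this does not replace the summary; it only gives a product team the specific identifier to look up.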

Courtney Shealy: With the AI. That’s great. It’s interesting that you bring that up. I had a customer, a product manufacturer, vacuums, just gonna say they were vacuums, which by the way wasn’t Dyson, actually competitor SharkNinja. But what was interesting, and this was years ago, what I liked was the first lesson in sentiment.

The word suck for a vacuum is positive, people. It is not negative, so you gotta do a little tuning there. So it was fun. I was like, why is everything red? It’s supposed to be doing this. So it was a lot of changes. But what was interesting with them, and the reason why I’m opening this up, is they taught me that a lot of times when you look at products and the error codes and looking for where the issues might be, there were certain product families that mattered way more to them than others, because it’s where they invest all their dollars.

It’s where all the [00:36:00] commercials are. They’re in the SKU families that are the elite. When you see those commercials spilling the milk with all the Cheerios and stuff, they’re getting them right. So those are the things that really matter to them.
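The sentiment lesson from the vacuum example can be sketched as a domain override layered on a general word list. This is a toy lexicon scorer with made-up word scores, written only to illustrate the idea of tuning; it is an assumption for illustration and not how Medallia’s text analytics is implemented.

```python
import re

# Toy general-purpose lexicon; the scores are illustrative assumptions.
GENERAL_LEXICON = {"great": 1, "love": 1, "broken": -1, "suck": -1, "sucks": -1}

# Hypothetical override for a vacuum product line, where suction is a good thing.
VACUUM_OVERRIDES = {"suck": 1, "sucks": 1}

def score(text, overrides=None):
    """Sum word scores, letting domain overrides replace general scores."""
    lexicon = {**GENERAL_LEXICON, **(overrides or {})}
    words = re.findall(r"[a-z']+", text.lower())
    return sum(lexicon.get(word, 0) for word in words)

review = "This vacuum sucks up everything, love it."
general_score = score(review)                   # general lexicon only
tuned_score = score(review, VACUUM_OVERRIDES)   # with the vacuum override
print(general_score, tuned_score)
```

Keeping the override separate from the general list means the same scorer can serve other product lines without the vacuum-specific tuning leaking into them.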

And so I take that approach now to customers to say, are there product families that matter most? Let’s go isolate down my topic set to support that particular suite of products so that I do get more information. AI and the summarization are not going to diminish it because it’s not that important anymore.

It is tied to where we get most of our revenue. It is tied to where we are planning on investing and growing a certain market segment, those types of things. So I found it interesting that sometimes you have to play around with: where are you going as a company? Those three outcomes that I mentioned earlier, what are those targets?

I need to know what those targets are, because then we might make recommendations to say, let’s build something new. It could be a new report, but it could be a new topic set. It could be a new overlay or prompt to the AI to make it fit the needs that you have, [00:37:00] right? To make the right recommendations. Go see the booth.

You can see a lot of these things live, right? You can see a session if you have more questions. We wanna make sure we show up when we’re having meaningful conversations with you. So if this is a challenge you’re seeing with AI or using conversational data in your programs, put it here. We’ll help make sure we address it.

Happy to come talk to you personally and/or just make sure that we show up talking about that more frequently, so that if you are shy and don’t wanna ask those questions and don’t like a mic on you, it’s fine. It’ll work out. So thank you all for your time though. Appreciate it.